In the latest of our Guest Author articles, Vin Mitty takes a deeper look at AI-driven training personalization and how we can use it to revolutionize L&D.

Corporate training has been a check-the-box exercise for years. AI didn’t break it, and the issues we are facing aren’t new. AI just finally pulled back the curtain on how much time we’ve been wasting.

AI Didn’t Break Corporate Training. We Did.

Way before AI became a buzzword, employees were already zoning out during mandatory training. Mostly, that is because the training they get has almost nothing to do with the actual fire they need to put out five minutes after the module ends.

On paper, everything looks great. The L&D dashboard is glowing green, and completion rates are through the roof. But then those same people get back to their desks, run into real-world nuance, and freeze. In a regulated industry, that “freeze” is expensive. It leads to bad calls, unnecessary escalations, and a loss of confidence and productivity.

Research has shown this isn’t new. Studies highlighted in Harvard Business Review have consistently found that most corporate training fails to transfer into real on-the-job behavior once employees return to work. Completion doesn’t equal competence.

AI didn’t create this gap; it just turned the lights on.

The “Training Data Dump” Problem

For decades, we’ve treated workplace learning like a funnel: we just keep pouring information into people’s heads and hoping some of it sticks. We take a policy, turn it into 40 slides, and call it “training”.

This assumes people fail because they lack information. But in my experience, people fail because they lack a clear direction.

I’ve seen this play out a thousand times in legal, government, and other compliance-heavy environments. People pass the quiz with flying colors. Then, the moment a client asks a question that doesn’t fit the “perfect” scenario from the training, they panic. They don’t trust themselves to use the tools they were just “trained” on. From the outside, it looks like they’re resisting change. In reality, they’ve been set up to fail.

This disconnect mirrors a broader shift toward skills-based learning, where the focus moves away from content delivery and toward real-world capability.

Regulated Industries Don’t Forgive Guessing

The “learning by doing” model doesn’t always work in a regulated space; it’s actually terrifying. Policies change every other week, but the training modules are updated only once a year. There is little room for risk or mistakes.

When learning modules are designed in separate silos from real work, they just become a hindrance. People comply because they have to, not because it helps them. Over time, you lose trust and you lose productivity. People stop believing that the organization actually wants to help them get better; they think the organization just wants to cover its own tracks.

What This Looks Like on a Real Tuesday

Here’s how this usually plays out in the real world.

An employee finishes their annual compliance training. They passed the quiz. The dashboard is green. Everyone moves on. Then, a week later, a real situation shows up. A customer asks a question that sits in a gray area. It’s not illegal, but it’s not clearly allowed either. The training never covered this version of the problem.

So the employee freezes…

Not because they’re lazy. Not because they didn’t pay attention. But because the training taught rules, not judgment. In regulated environments, guessing feels dangerous. People worry about audits, escalations, and personal liability. So instead of acting, they escalate. Or they overcorrect. Or they delay.

I’ve seen this pattern repeatedly in legal, compliance, and other high-stakes environments. What looks like resistance or lack of confidence is often a perfectly rational response to poorly designed learning. When the cost of being wrong is high, people default to safety. Training that lives outside the workflow doesn’t help when decisions need to happen in the moment.

This is the gap AI is exposing. Not because AI is smarter, but because it shows us how little support people actually have when it matters.

What AI Is Actually For (Hint: It’s Not Just Content)

There’s a lot of hype about AI automating the trainer’s job. Honestly? That’s missing the point.

I believe the real value of AI isn’t in making more content; it’s in getting the right guidance to show up exactly when the work is actually happening. That is what AI-driven training personalization can unlock: real-time, personalized guidance.

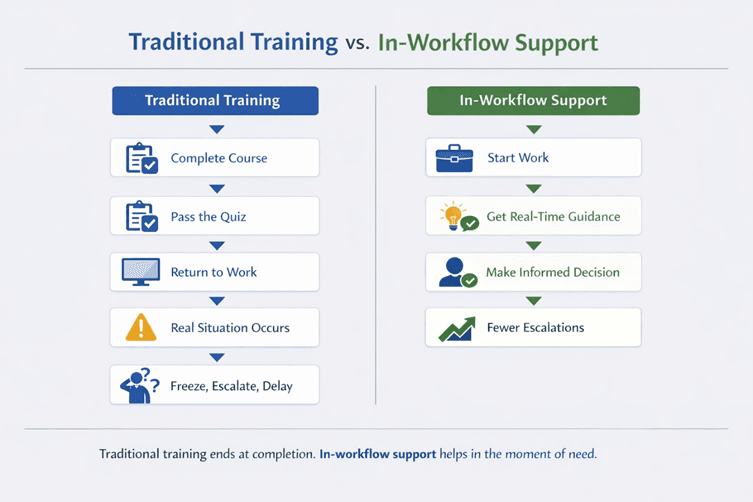

Traditional Training vs AI-Driven In-Workflow Support: Traditional training optimizes for completion. In-workflow support optimizes for better decisions when work is happening.

In a regulated environment, people don’t need more slides. They need a “safety net”: something like AI-driven training personalization that surfaces specific guidance exactly when they’re making a risky call. AI can spot the patterns of where people are actually getting stuck, rather than relying on those “How did we do?” surveys where everyone clicks five stars so they can go back to work.
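To make “spotting where people get stuck” concrete, here is a deliberately simple sketch. It is a plain frequency count, not AI, and the escalation log, its field layout, and the `stuck_points` helper are all hypothetical. It only illustrates the kind of operational signal an in-workflow system could start from.

```python
from collections import Counter

# Hypothetical escalation log: (employee_id, topic) pairs.
# The data and topic names are invented for illustration.
escalations = [
    ("e1", "refund exception"),
    ("e2", "data retention"),
    ("e3", "refund exception"),
    ("e1", "refund exception"),
    ("e4", "contract wording"),
]


def stuck_points(events, min_count=2):
    """Return (topic, count) pairs escalated at least min_count times,
    most frequent first."""
    counts = Counter(topic for _, topic in events)
    return [(topic, n) for topic, n in counts.most_common() if n >= min_count]


hotspots = stuck_points(escalations)
print(hotspots)
```

A topic that keeps showing up here is a candidate for targeted, in-the-moment guidance rather than another slide in the annual module.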

This is where performance support systems matter more than delivering another learning module.

Stop Measuring What’s Easy to Count

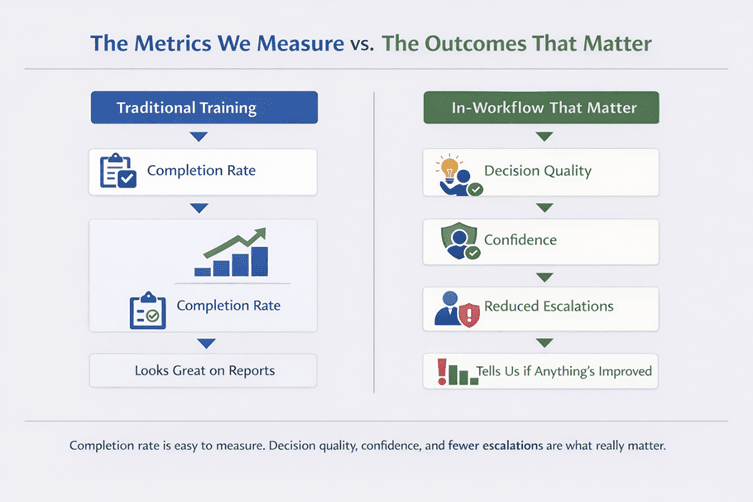

L&D teams love completion rates because they’re easy to report to the board. But “did they finish the course?” is a useless metric.

The conversation gets much more interesting when you connect learning to actual operational data. Are escalations actually dropping? Is the time-to-productivity for new hires shrinking?

Those signals are messier than a completion percentage, but they’re the only ones that matter. OEB and other industry researchers have increasingly emphasized that in-workflow enablement and guidance outperform traditional course-first models when we look at real outcomes. AI helps here by connecting the dots across several systems, giving us a view of whether people are getting better at their jobs.
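As a rough sketch of what connecting learning to operational data might look like, the example below puts a completion rate next to an escalation rate measured before and after training. The `EmployeeRecord` fields, the numbers, and the `summarize` helper are assumptions made up for illustration; real data would come from your own LMS and case-management systems.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EmployeeRecord:
    # All fields are hypothetical; map them to whatever your systems expose.
    completed_training: bool
    cases_before: int
    escalations_before: int
    cases_after: int
    escalations_after: int


def escalation_rate(escalations, cases):
    """Share of cases that were escalated; 0.0 when there are no cases."""
    return escalations / cases if cases else 0.0


def summarize(records):
    """Compare the easy metric (completion) with an outcome metric."""
    completion = mean(1.0 if r.completed_training else 0.0 for r in records)
    before = escalation_rate(
        sum(r.escalations_before for r in records),
        sum(r.cases_before for r in records),
    )
    after = escalation_rate(
        sum(r.escalations_after for r in records),
        sum(r.cases_after for r in records),
    )
    return {
        "completion_rate": completion,
        "escalation_rate_before": before,
        "escalation_rate_after": after,
        "change": after - before,
    }


# Invented sample data: everyone completed the course.
records = [
    EmployeeRecord(True, 20, 5, 25, 5),
    EmployeeRecord(True, 20, 4, 15, 4),
    EmployeeRecord(True, 10, 1, 10, 1),
]

summary = summarize(records)
print(summary)
```

In this made-up sample the completion rate is 100% while the escalation rate does not move at all, which is exactly the situation a green dashboard hides.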

Metrics vs Outcomes: Completion rates are easy to count. Decision quality, confidence, and reduced escalations are harder to measure, but far more meaningful.

The Shift L&D Teams Are Struggling to Make

In the world of compliance and regulation, training ROI isn’t usually flashy. It’s quiet.

It’s the sound of a team that doesn’t have to second-guess every email. It’s the energy saved when people trust the system enough to stop building manual workarounds.

To be fair, this isn’t easy for learning teams.

For years, success in L&D meant producing content. Courses. Modules. Certifications. That’s what budgets, tools, and incentives were built around. When AI-driven training personalization enters the picture and starts questioning the value of static content, it can feel threatening. If learning isn’t about courses anymore, then what exactly is the job?

The answer isn’t “less learning.” It’s different learning.

The shift is from publishing information to supporting decisions. From asking “Did they complete the training?” to asking “Did they make a better call when it counted?” That requires closer ties to operations, messier data, and a willingness to measure training outcomes that don’t fit neatly into dashboards.

AI doesn’t replace L&D. It raises the bar. It forces learning to prove its value where it always claimed it lived: on the job, under pressure, in the middle of real work.

And that shift, while uncomfortable, is long overdue.

Learning Is a Support System, Not a Library

The biggest shift we need to make is one of mindset, not technology. Learning isn’t a destination or a mandated event. It’s a part of the infrastructure, just like your CRM or your data stack.

AI can get us there, but only if we stop acting like content publishers and start acting like builders of support systems. The goal is to help a human being make a better decision when things get messy.

When you build support directly into the workflow with AI-driven training personalization, you don’t have to push adoption anymore. It just happens.

Vin Mitty is a data and AI leader with over 15 years of experience helping organizations move from analytics ambition to real business impact. He advises executives on AI adoption and decision-making, is an AI in Education Advocate, and hosts the Data Democracy podcast. As the Senior Director of Data Science and AI at LegalShield, he leads the company’s enterprise-scale AI and machine learning initiatives.